Loom Technical Deep Dive: eBPF, K8s, and Enterprise Observability

When you let an AI agent write and execute code in your environment, you've just introduced something fundamentally different from traditional software. It's not a bug—it's a feature that requires a completely new observability approach.

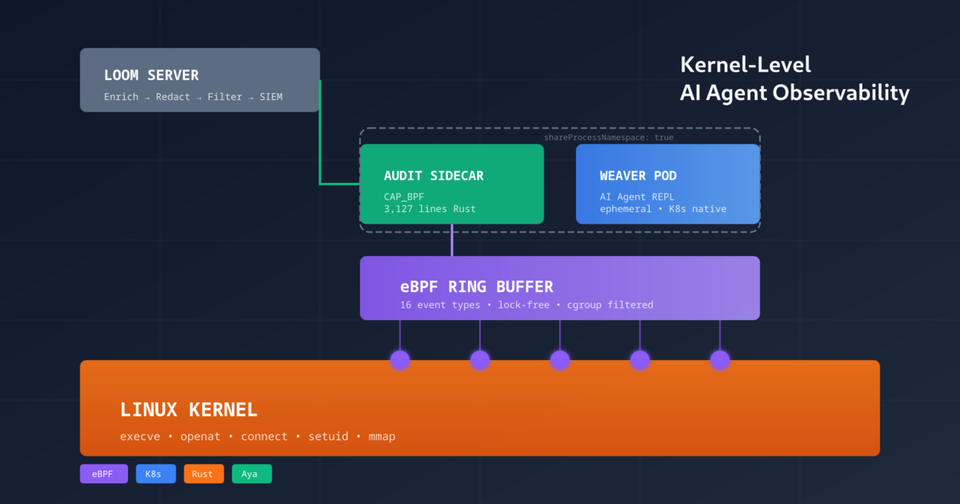

This post dives deep into how Loom implements kernel-level observability for AI agents using eBPF, Kubernetes-native ephemeral compute, and enterprise-grade audit infrastructure.

Architecture Overview

Loom's observability stack consists of three layers: kernel-level eBPF tracing, a userspace sidecar that processes events, and a server that aggregates, enriches, and routes audit data.

Diagram: event flow through the three layers

- Kernel-level eBPF tracing: eBPF programs (execve, openat, connect, setuid) write to a ring buffer (lock-free event queue).

- Weaver Pod: the Audit Sidecar (CAP_BPF + cgroup filter) reads from the ring buffer and shares a PID namespace with the Weaver Process (AI Agent REPL).

- Loom Server: the sidecar sends events to AuditService (Enrich → Redact → Filter), which routes them to the sinks: SQLite, Syslog, HTTP, OpenTelemetry.

eBPF Implementation: ~5,000 Lines of Pure Rust

The eBPF layer is implemented using Aya, a pure-Rust eBPF framework. No C, no LLVM dependency for end users—just Rust all the way down.

| Crate | Lines | Purpose |
|---|---|---|
| loom-weaver-ebpf/ | 1,297 | Tracepoint programs (execve, openat, connect) |
| loom-weaver-ebpf-common/ | 646 | Shared event types with compile-time size assertions |
| loom-weaver-audit-sidecar/ | 3,127 | Userspace: loader, buffer, client, filter, DNS cache |

Event Types: 16 Categories

The eBPF programs capture 16 distinct event types, each with a fixed-size struct for efficient ring buffer transport.

Each event carries a 32-byte header with the event type, timestamp, PID, TID, UID, and GID, captured at the kernel level with nanosecond-resolution timestamps.

    #[repr(C)]
    pub struct EventHeader {
        pub event_type: u32,   // EventType enum
        // repr(C) inserts 4 bytes of padding here so the u64 is 8-byte aligned
        pub timestamp_ns: u64, // bpf_ktime_get_ns()
        pub pid: u32,
        pub tid: u32,
        pub uid: u32,
        pub gid: u32,
    }

    // Compile-time assertion: exactly 32 bytes (28 bytes of fields + 4 of padding)
    const _: () = assert!(core::mem::size_of::<EventHeader>() == 32);
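To make the 32-byte layout concrete, here is a minimal sketch of how a userspace consumer could decode the header from raw ring buffer bytes. The struct mirrors the one above; `parse_header`, `HEADER_LEN`, and the helper functions are hypothetical names, and the sketch assumes a 64-bit target where the kernel and the sidecar share the same endianness.

```rust
/// Mirror of the header above (same field order and types).
#[repr(C)]
#[derive(Debug, Clone, Copy, PartialEq)]
pub struct EventHeader {
    pub event_type: u32,
    pub timestamp_ns: u64,
    pub pid: u32,
    pub tid: u32,
    pub uid: u32,
    pub gid: u32,
}

/// Size of the header on the wire, matching the compile-time assertion.
pub const HEADER_LEN: usize = 32;

// Read a native-endian u32 at byte offset `off`.
fn u32_at(buf: &[u8], off: usize) -> u32 {
    let mut b = [0u8; 4];
    b.copy_from_slice(&buf[off..off + 4]);
    u32::from_ne_bytes(b)
}

// Read a native-endian u64 at byte offset `off`.
fn u64_at(buf: &[u8], off: usize) -> u64 {
    let mut b = [0u8; 8];
    b.copy_from_slice(&buf[off..off + 8]);
    u64::from_ne_bytes(b)
}

/// Decode a header without `unsafe`. Returns None if the buffer is too short.
pub fn parse_header(buf: &[u8]) -> Option<EventHeader> {
    if buf.len() < HEADER_LEN {
        return None;
    }
    Some(EventHeader {
        event_type: u32_at(buf, 0),
        // Bytes 4..8 are the repr(C) padding before the u64 and are skipped.
        timestamp_ns: u64_at(buf, 8),
        pid: u32_at(buf, 16),
        tid: u32_at(buf, 20),
        uid: u32_at(buf, 24),
        gid: u32_at(buf, 28),
    })
}
```

Decoding field by field keeps the reader independent of struct alignment in the consumer, at the cost of hard-coding the offsets that the size assertion protects.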

Sandbox Escape Detection

SandboxEscapeEvent detects 9 categories of container/sandbox escape attempts, which is critical for AI agents that might be manipulated into malicious behavior.

| Escape Type | Syscalls Monitored | Risk |
|---|---|---|
| Namespace | unshare, setns | Container breakout via namespace manipulation |
| Seccomp | prctl, seccomp | Disabling syscall filters |
| Ptrace | ptrace | Process injection, debugging other processes |
| ModuleLoad | init_module, finit_module | Kernel module insertion |
| Mount | mount, umount2 | Filesystem escape |
| ProcSys | open(/proc/, /sys/) | Sensitive kernel interface access |
| Container | Various | Docker/containerd-specific escapes |
| Bpf | bpf() | Agent trying to load its own eBPF |
| PerfEvent | perf_event_open | Performance monitoring abuse |
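The table can be read as a lookup from syscall to escape category. The following is a rough userspace sketch of that mapping, not Loom's actual classifier: `EscapeType` and `classify` are hypothetical names, the Container category is left out because the table only lists "Various" for it, and flagging every prctl call is a deliberate simplification.

```rust
/// Escape categories from the table above (Container omitted: syscalls unspecified).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum EscapeType {
    Namespace,
    Seccomp,
    Ptrace,
    ModuleLoad,
    Mount,
    ProcSys,
    Bpf,
    PerfEvent,
}

/// Map a syscall name (plus the path, for open-style calls) to a category.
/// Returns None for syscalls the table does not list.
pub fn classify(syscall: &str, path: Option<&str>) -> Option<EscapeType> {
    match syscall {
        "unshare" | "setns" => Some(EscapeType::Namespace),
        // Simplification: a real filter would inspect the prctl option.
        "prctl" | "seccomp" => Some(EscapeType::Seccomp),
        "ptrace" => Some(EscapeType::Ptrace),
        "init_module" | "finit_module" => Some(EscapeType::ModuleLoad),
        "mount" | "umount2" => Some(EscapeType::Mount),
        "bpf" => Some(EscapeType::Bpf),
        "perf_event_open" => Some(EscapeType::PerfEvent),
        // File opens only count when they touch /proc/ or /sys/.
        "open" | "openat" => match path {
            Some(p) if p.starts_with("/proc/") || p.starts_with("/sys/") => {
                Some(EscapeType::ProcSys)
            }
            _ => None,
        },
        _ => None,
    }
}
```

For example, `classify("openat", Some("/proc/1/root"))` yields `Some(EscapeType::ProcSys)`, while the same call on `/tmp/x` yields `None`.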

Cgroup Filtering: Only Capture Target Container

The critical challenge with eBPF tracing is filtering. Without filtering, you'd capture every syscall on the host. Loom uses cgroup-based filtering to scope capture to just the AI agent's container:

    // In the eBPF program (main.rs)
    fn should_capture_event() -> bool {
        // Get the current task's cgroup ID
        let cgroup_id = unsafe { bpf_get_current_cgroup_id() };

        // Check against the configured target cgroup
        match TARGET_CGROUP_ID.get(0) {
            Some(target) => cgroup_id == *target,
            None => false, // No target configured, capture nothing
        }
    }

    #[tracepoint]
    pub fn sys_enter_execve(ctx: TracePointContext) -> u32 {
        if !should_capture_event() {
            return 0; // Not the target cgroup: skip without emitting an event
        }

        // ... capture event
        0
    }

This means the eBPF programs are always loaded, but they only emit events for the specific container running the AI agent—zero noise from the rest of the system.
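The filter needs a target cgroup ID supplied from userspace. A minimal sketch of how a loader could resolve one, assuming cgroup v2, where the ID returned by `bpf_get_current_cgroup_id()` corresponds to the inode number of the cgroup's directory under the cgroup filesystem. The function names and the `/sys/fs/cgroup` mount point are assumptions, and inside a container the path in `/proc/<pid>/cgroup` is relative to the cgroup namespace, so this may need adjusting.

```rust
use std::fs;
use std::io;
use std::os::unix::fs::MetadataExt;
use std::path::{Path, PathBuf};

/// Extract the cgroup v2 path from the contents of /proc/<pid>/cgroup.
/// The unified-hierarchy entry has the form "0::<path>".
pub fn parse_cgroup_v2_path(contents: &str) -> Option<&str> {
    contents.lines().find_map(|line| line.strip_prefix("0::"))
}

/// Use the inode number of the cgroup directory as the cgroup ID.
pub fn cgroup_id_of_dir(dir: &Path) -> io::Result<u64> {
    Ok(fs::metadata(dir)?.ino())
}

/// Resolve the cgroup ID for a process (hypothetical helper).
pub fn target_cgroup_id(pid: u32) -> io::Result<u64> {
    let contents = fs::read_to_string(format!("/proc/{}/cgroup", pid))?;
    let rel = parse_cgroup_v2_path(&contents)
        .ok_or_else(|| io::Error::new(io::ErrorKind::NotFound, "no cgroup v2 entry"))?;
    // Assumed mount point of the unified hierarchy.
    let dir = PathBuf::from("/sys/fs/cgroup").join(rel.trim_start_matches('/'));
    cgroup_id_of_dir(&dir)
}
```

The resulting `u64` is what a loader would write into slot 0 of the `TARGET_CGROUP_ID` map before attaching the tracepoints.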

Kubernetes Provisioner: Ephemeral Compute

Weaver pods are ephemeral compute units—they exist only for the duration of an AI coding session. Kubernetes is the source of truth; there's no separate database for pod state.
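With Kubernetes as the source of truth, session state has to be recoverable from the pod objects themselves. One way to picture this is a sketch that rebuilds a weaver reference from pod labels and builds a label selector for listing a user's pods. The label keys are the ones used in the pod spec in the next section; `WeaverRef`, `selector_for_user`, and `weaver_from_labels` are hypothetical names, and the Kubernetes API call itself is omitted.

```rust
use std::collections::BTreeMap;

pub const WEAVER_ID_LABEL: &str = "loom.io/weaver-id";
pub const USER_ID_LABEL: &str = "loom.io/user-id";

/// Identity of a weaver, recovered purely from pod labels.
#[derive(Debug, PartialEq, Eq)]
pub struct WeaverRef {
    pub weaver_id: String,
    pub user_id: String,
}

/// Equality-based label selector for listing all pods of one user.
pub fn selector_for_user(user_id: &str) -> String {
    format!("{}={}", USER_ID_LABEL, user_id)
}

/// Rebuild the weaver reference from a pod's labels.
/// Returns None when either label is missing (not a weaver pod).
pub fn weaver_from_labels(labels: &BTreeMap<String, String>) -> Option<WeaverRef> {
    Some(WeaverRef {
        weaver_id: labels.get(WEAVER_ID_LABEL)?.clone(),
        user_id: labels.get(USER_ID_LABEL)?.clone(),
    })
}
```

Because nothing is stored outside the cluster, deleting the pod is the whole cleanup: there is no second record to reconcile.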

Pod Security Hardening

Every weaver pod runs with strict security constraints:

    apiVersion: v1
    kind: Pod
    metadata:
      name: weaver-abc123
      labels:
        loom.io/weaver-id: "abc123"
        loom.io/user-id: "user-xyz"
    spec:
      shareProcessNamespace: true  # Sidecar can see weaver's PIDs
      terminationGracePeriodSeconds: 30
      containers:
        - name: weaver
          image: ghcr.io/loom/weaver:latest
          securityContext:
            runAsNonRoot: true
            readOnlyRootFilesystem: true
            allowPrivilegeEscalation: false
            capabilities:
              drop: [ALL]
          resources:
            limits:
              memory: "2Gi"
              cpu: "2"
        - name: audit-sidecar
          image: ghcr.io/loom/audit-sidecar:latest
          securityContext:
            capabilities:
              add: [BPF, PERFMON]  # Required for eBPF
              drop: [ALL]
          env:
            - name: LOOM_AUDIT_SERVER_URL
              value: "https://loom.example.com"
            - name: LOOM_AUDIT_TARGET_PID
              value: "1"  # Weaver is PID 1 in shared namespace

The audit sidecar is granted CAP_BPF and CAP_PERFMON to load eBPF programs, but the weaver container itself has all capabilities dropped.

Native Sidecar Support (K8s 1.28+)

On Kubernetes 1.28+, Loom uses native sidecar containers (init containers declared with restartPolicy: Always; the feature is alpha in 1.28 and enabled by default from 1.29). This guarantees that the audit sidecar starts before the weaver and keeps running until the pod terminates, so there are no race conditions and no missed events at startup.

Enterprise Features

SIEM Integration: 60+ Audit Event Types

The audit system supports multiple output sinks for enterprise security tooling:

Diagram: audit pipeline

- Sources feed three processing stages in order: Enrich (Session, Org, GeoIP) → Redact (PII, Secrets) → Filter (Severity, Type).

- Output sinks: SQLite (local storage), Syslog (RFC 5424), Splunk (HEC), Datadog (HTTP), Elastic (Bulk API), OpenTelemetry (OTLP).
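The enrich, redact, filter ordering matters: redaction runs on the enriched event, and filtering happens last so that dropped events cost nothing downstream. Here is a small sketch of that ordering. All types and function names are hypothetical, the list of sensitive key fragments is an assumption, and GeoIP enrichment is left out.

```rust
use std::collections::BTreeMap;

/// Variant order defines severity order: Info < Warning < Critical.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
pub enum Severity {
    Info,
    Warning,
    Critical,
}

#[derive(Debug, Clone)]
pub struct AuditEvent {
    pub event_type: String,
    pub severity: Severity,
    pub fields: BTreeMap<String, String>,
}

/// Enrich: attach session and org context.
pub fn enrich(mut ev: AuditEvent, session_id: &str, org_id: &str) -> AuditEvent {
    ev.fields.insert("session_id".to_string(), session_id.to_string());
    ev.fields.insert("org_id".to_string(), org_id.to_string());
    ev
}

/// Redact: mask the value of any field whose key looks sensitive.
pub fn redact(mut ev: AuditEvent) -> AuditEvent {
    const SENSITIVE: [&str; 3] = ["password", "token", "secret"];
    for (key, value) in ev.fields.iter_mut() {
        let lowered = key.to_lowercase();
        if SENSITIVE.iter().any(|s| lowered.contains(s)) {
            *value = "[REDACTED]".to_string();
        }
    }
    ev
}

/// Filter: drop events below the configured minimum severity.
pub fn filter(ev: AuditEvent, min: Severity) -> Option<AuditEvent> {
    if ev.severity >= min {
        Some(ev)
    } else {
        None
    }
}

/// Full pipeline in the order shown in the diagram.
pub fn process(ev: AuditEvent, session_id: &str, org_id: &str, min: Severity) -> Option<AuditEvent> {
    filter(redact(enrich(ev, session_id, org_id)), min)
}
```

An event that survives `process` is what each sink would then serialize in its own format.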

| Sink | Format | Use Case |
|---|---|---|
| SQLite | JSON | Local storage, debugging |
| Syslog | RFC 5424 + CEF | Traditional SIEM (QRadar, ArcSight) |
| HTTP | JSON | Splunk HEC, Datadog, custom webhooks |
| OpenTelemetry | OTLP | Cloud-native observability stacks |
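For the Syslog row, the RFC 5424 framing is simple enough to sketch. The header is `<PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID`, followed by structured data and the message, where PRI is `facility * 8 + severity`. The struct and function names below are hypothetical, structured data is left as the nil value `-`, and the CEF payload that the table mentions is not modelled.

```rust
/// Fields of an RFC 5424 message (structured data omitted in this sketch).
pub struct SyslogMessage<'a> {
    pub facility: u8,
    pub severity: u8,
    pub timestamp: &'a str, // RFC 3339 timestamp
    pub hostname: &'a str,
    pub app_name: &'a str,
    pub proc_id: &'a str,
    pub msg_id: &'a str,
    pub msg: &'a str,
}

/// PRI value as defined by RFC 5424: facility * 8 + severity.
pub fn pri(facility: u8, severity: u8) -> u16 {
    u16::from(facility) * 8 + u16::from(severity)
}

/// Render one message line; "1" is the protocol version, "-" is nil structured data.
pub fn format_rfc5424(m: &SyslogMessage<'_>) -> String {
    format!(
        "<{}>1 {} {} {} {} {} - {}",
        pri(m.facility, m.severity),
        m.timestamp,
        m.hostname,
        m.app_name,
        m.proc_id,
        m.msg_id,
        m.msg
    )
}
```

With facility 13 (log audit) and severity 4 (warning), the PRI field is 108, so the line starts with `<108>1`.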

SCIM Provisioning

RFC 7643/7644 compliant SCIM enables automatic user provisioning from enterprise IdPs:

- Automatic provisioning — Users added in Okta/Azure AD automatically appear in Loom

- Automatic deprovisioning — Disabled users have sessions revoked immediately

- Group-to-Team mapping — IdP groups sync to Loom teams

- Full PATCH support — Incremental updates, not full replace
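Deprovisioning typically arrives as a SCIM PATCH that replaces the user's `active` attribute with `false`. The sketch below shows how such an operation could be applied incrementally and trigger session revocation. It is a simplified model under stated assumptions: the types are hypothetical, only the `replace` operation on two attributes is handled, and JSON parsing is omitted.

```rust
/// Value carried by a PATCH operation (only the types this sketch needs).
#[derive(Debug, Clone, PartialEq)]
pub enum PatchValue {
    Bool(bool),
    Text(String),
}

/// A single SCIM PATCH operation; "add" and "remove" are omitted here.
#[derive(Debug, Clone, PartialEq)]
pub enum PatchOp {
    Replace { path: String, value: PatchValue },
}

#[derive(Debug, Default)]
pub struct User {
    pub user_name: String,
    pub display_name: String,
    pub active: bool,
    pub sessions: Vec<String>,
}

/// Apply operations in order, touching only the attributes they name.
pub fn apply_patch(user: &mut User, ops: &[PatchOp]) -> Result<(), String> {
    for op in ops {
        match op {
            PatchOp::Replace { path, value } => match (path.as_str(), value) {
                ("active", PatchValue::Bool(active)) => {
                    user.active = *active;
                    if !*active {
                        // Deprovisioning: revoke every session immediately.
                        user.sessions.clear();
                    }
                }
                ("displayName", PatchValue::Text(name)) => user.display_name = name.clone(),
                (other, _) => {
                    return Err(format!("unsupported path or value type: {}", other));
                }
            },
        }
    }
    Ok(())
}
```

Because the operation names a single attribute, the rest of the user record is left untouched, which is the difference between PATCH and a full replace.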

Feature Flags with Kill Switches

The feature flag system includes emergency kill switches that can instantly disable a feature for all users.

Key capabilities:

- Per-org and platform-level flags

- Multi-variant experiments with exposure tracking

- Environment-scoped config (dev/staging/prod)

- Real-time SSE updates to all connected SDKs

- Stale flag detection (not evaluated in 30 days)
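A kill switch is only useful if it wins over every other rule. Here is a minimal evaluation sketch under assumed semantics: the kill switch is checked first, then a per-org override, then the platform default. The `Flag` struct, the precedence of org over platform, and treating a never-evaluated flag as stale are all assumptions made for illustration.

```rust
use std::time::Duration;

/// Staleness threshold from the list above: not evaluated in 30 days.
pub const STALE_AFTER: Duration = Duration::from_secs(30 * 24 * 60 * 60);

pub struct Flag {
    pub platform_enabled: bool,
    /// Per-org override, if one is set.
    pub org_override: Option<bool>,
    pub kill_switch: bool,
    /// Time since the last evaluation; None if never evaluated.
    pub since_last_eval: Option<Duration>,
}

/// Kill switch first, then the org override, then the platform default.
pub fn evaluate(flag: &Flag) -> bool {
    if flag.kill_switch {
        return false;
    }
    flag.org_override.unwrap_or(flag.platform_enabled)
}

/// A flag is stale once it has gone 30 days without being evaluated.
pub fn is_stale(flag: &Flag) -> bool {
    match flag.since_last_eval {
        Some(age) => age >= STALE_AFTER,
        None => true, // Assumption: never evaluated counts as stale.
    }
}
```

Checking the kill switch before anything else means no override or experiment variant can accidentally re-enable a feature that has been pulled.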

ABAC: Defense in Depth

Attribute-Based Access Control with multiple authorization layers:

    ┌─────────────────────────────────────────────────┐
    │ Layer 1: Route-level middleware                 │
    │          RequireCapability, RequireRole         │
    │          → Reject unauthorized requests early   │
    ├─────────────────────────────────────────────────┤
    │ Layer 2: Handler-level authorize! macro         │
    │          Fine-grained, resource-specific checks │
    │          → Context-aware authorization decisions│
    ├─────────────────────────────────────────────────┤
    │ Layer 3: Audit logging at both layers           │
    │          All grants and denials logged          │
    │          → Security monitoring and compliance   │
    └─────────────────────────────────────────────────┘
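The three layers can be sketched as two checks that both write to a shared audit log. This is an illustration only: it uses plain functions rather than Loom's middleware or its `authorize!` macro, and the roles, attributes, and policy (same org, and either admin or owner) are assumptions.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Role {
    Member,
    Admin,
}

pub struct Principal {
    pub user_id: String,
    pub org_id: String,
    pub role: Role,
}

pub struct Resource {
    pub owner_id: String,
    pub org_id: String,
}

/// Layer 3: every decision, grant or denial, is recorded.
#[derive(Debug, PartialEq, Eq)]
pub struct AuditRecord {
    pub layer: u8,
    pub user_id: String,
    pub allowed: bool,
}

/// Layer 1: coarse route-level check on the caller's role.
pub fn require_role(p: &Principal, required: Role, log: &mut Vec<AuditRecord>) -> bool {
    let allowed = match required {
        Role::Member => true,
        Role::Admin => p.role == Role::Admin,
    };
    log.push(AuditRecord { layer: 1, user_id: p.user_id.clone(), allowed });
    allowed
}

/// Layer 2: resource-specific check on the attributes of both sides.
pub fn authorize(p: &Principal, r: &Resource, log: &mut Vec<AuditRecord>) -> bool {
    let same_org = p.org_id == r.org_id;
    let allowed = same_org && (p.role == Role::Admin || p.user_id == r.owner_id);
    log.push(AuditRecord { layer: 2, user_id: p.user_id.clone(), allowed });
    allowed
}

/// A handler passes only if both layers allow the request.
pub fn handle(p: &Principal, r: &Resource, log: &mut Vec<AuditRecord>) -> bool {
    require_role(p, Role::Member, log) && authorize(p, r, log)
}
```

A request rejected at layer 1 never reaches layer 2, but it still leaves an audit record, which is what makes denials visible to security monitoring.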

Verified vs. Inferred

| Component | Status | Evidence |
|---|---|---|
| eBPF programs | Verified | 1,297 lines with #[tracepoint] macros |
| Event types (16) | Verified | Compile-time size assertions in code |
| Audit sidecar | Verified | 3,127 lines across 12 modules |
| Cgroup filtering | Verified | should_capture_event() in main.rs |
| K8s provisioner | Spec | weaver-provisioner.md |
| SIEM sinks | Spec | audit-system.md |
| SCIM provisioning | Spec | scim-system.md (RFC compliant) |
| Feature flags | Spec | feature-flags-system.md |

Conclusion

Loom's approach to AI agent observability represents a fundamental shift from traditional APM. By implementing kernel-level tracing with eBPF, it captures what actually happens rather than what the application reports. Combined with Kubernetes-native ephemeral compute and enterprise-grade audit infrastructure, it provides the visibility needed to trust AI agents with real work.

The key insight: AI agents operate in a fundamentally different trust model than traditional applications. You don't just need to know when they crash—you need to know what they're doing, every syscall, every network connection, every file access. Loom makes that visible.